In my recent post, “How I Am Running Sites in 2026,” I detailed my current stack, centered on Astro and Cloudflare. A common question I’ve received since is how this agent-heavy approach fits alongside the CI/CD pipelines we’ve relied on for years.

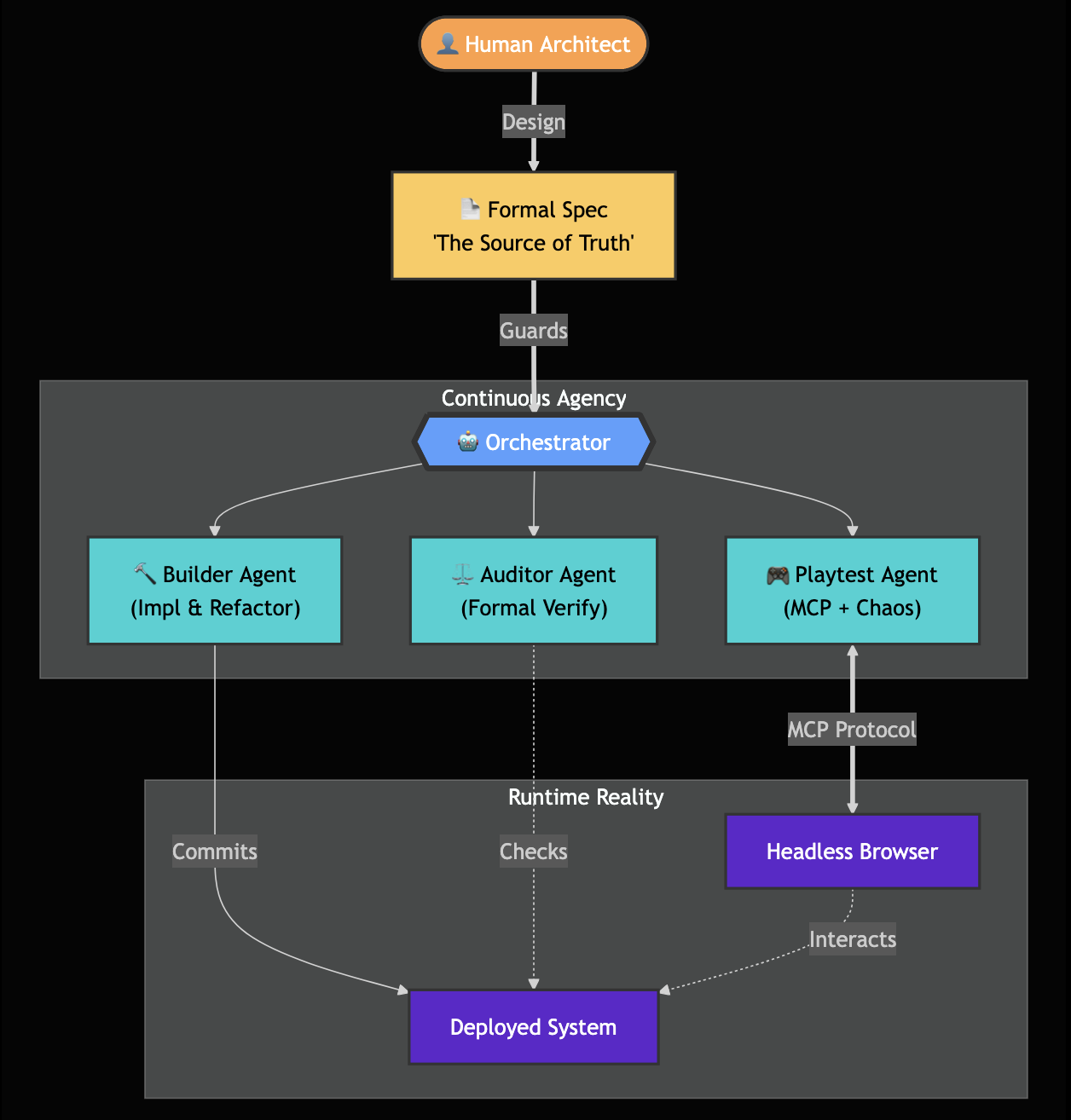

The answer is simple: agents don’t replace CI/CD; they augment it. I’m moving toward a paradigm of Continuous Agency. My CI/CD pipelines continue to handle the deterministic “heavy lifting” (building, linting, and deployment) where predictability is a requirement. On top of them, I’m layering specialized AI agents that handle the continuous exploration, validation, and maintenance tasks that static pipelines aren’t designed to reach.

The Shift: From Pipelines to Perpetual Agency

Traditional CI/CD is great for consistency, but it hits a wall once systems become complex and stateful. Static YAML pipelines aren’t built to reason about state; they’re built to follow instructions. Continuous Agency adds intelligent agents that can reason about that state and act autonomously within the same infrastructure.

```mermaid
graph LR
    Spec[Formal Spec] --> Orchestrator
    Orchestrator --> CodeAgent[Code Gen Agent]
    Orchestrator --> Verifier[Verification Agent]
    Orchestrator --> Playtester[Playtest Agent]
    CodeAgent --> GitHub[GitHub PR]
    GitHub --> Cloudflare[Cloudflare Deployment]
    Playtester <-->|CDP/MCP| Browser[Chrome/Browser Engine]
    Browser <-->|Sync State| GameServer[Multiplayer Server]
```
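To make the orchestrator’s role more concrete, here is a minimal TypeScript sketch of the fan-out in the diagram. Every interface and name in it (`Spec`, `CodeAgent`, `CheckingAgent`, `orchestrate`, the stub agents) is hypothetical rather than taken from a real framework, and real agents would call an LLM, a model checker, or a browser instead of returning canned answers. What it illustrates is the hand-off: agents propose and check, only a patch that every checker accepts becomes a pull request, and from that point the existing GitHub-to-Cloudflare pipeline runs unchanged.

```typescript
// Hypothetical types: none of these names come from a real agent framework.
interface Spec {
  feature: string;
  invariants: string[]; // machine-readable properties the feature must keep
}

interface Patch {
  branch: string;
  files: string[];
}

interface Verdict {
  agent: string;
  passed: boolean;
  findings: string[];
}

interface CodeAgent {
  generate(spec: Spec): Promise<Patch>;
}

interface CheckingAgent {
  name: string;
  check(spec: Spec, patch: Patch): Promise<Verdict>;
}

interface OrchestratorResult {
  openPullRequest: boolean; // true => hand off to the normal CI/CD pipeline
  patch: Patch;
  verdicts: Verdict[];
}

async function orchestrate(
  spec: Spec,
  coder: CodeAgent,
  checkers: CheckingAgent[],
): Promise<OrchestratorResult> {
  // 1. The code generation agent turns the spec into a candidate patch.
  const patch = await coder.generate(spec);
  // 2. Verification and playtest agents review the same patch in parallel.
  const verdicts = await Promise.all(checkers.map((c) => c.check(spec, patch)));
  // 3. Only a patch that every checker accepts becomes a PR; build, lint,
  //    and deploy stay in the deterministic pipeline from there on.
  return { openPullRequest: verdicts.every((v) => v.passed), patch, verdicts };
}

// Stub agents standing in for real ones.
const stubCoder: CodeAgent = {
  generate: async (spec) => ({ branch: `agent/${spec.feature}`, files: ["src/board.ts"] }),
};
const stubVerifier: CheckingAgent = {
  name: "verifier",
  check: async (spec) => ({ agent: "verifier", passed: spec.invariants.length > 0, findings: [] }),
};
const stubPlaytester: CheckingAgent = {
  name: "playtester",
  check: async () => ({ agent: "playtester", passed: false, findings: ["desync after reconnect"] }),
};
```

With these stubs, `orchestrate({ feature: "flip", invariants: ["disc count grows"] }, stubCoder, [stubVerifier, stubPlaytester])` resolves with `openPullRequest: false`, because the stub playtester reports a finding. Keeping the gate a plain boolean means the agent layer never has to touch the deterministic build and deploy steps.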

My role is shifting from writing boilerplate to designing systems and refining specifications. The agent team handles the implementation, verification, and exploratory testing.

Formal Specifications as Executable Truth

Natural language is notoriously ambiguous, and that ambiguity is a major source of software friction. By moving toward formal specifications, I treat the design as the “source of truth.”

- The Design Agent: Refines initial feature ideas into structured, machine-readable specifications.

- The Code Generation Agent: Transforms those specifications into declarative code.

- The Verification Agent: Validates that the code adheres to the spec, using property-based testing and model checking.
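For a feel of what the verification agent’s property-based testing can look like, here is a hand-rolled TypeScript sketch against Reversi-style rules. The board encoding, the function names, and the three properties are illustrative assumptions, not the actual Stellar Whiskers code, and a library such as fast-check would add shrinking of failing cases, which this version omits. It generates game states through random playouts driven by a seeded PRNG, so any failure can be replayed from its seed, and after every move it checks that the disc total grows by exactly one, the mover gains the flipped discs plus the placed one, and the opponent loses exactly the flipped discs.

```typescript
// Illustrative Reversi rules and properties; not the Stellar Whiskers code.
type Cell = 0 | 1 | 2; // 0 = empty, 1 = black, 2 = white
type Board = Cell[]; // 64 cells, row-major
const DIRS: Array<[number, number]> = [
  [-1, -1], [-1, 0], [-1, 1], [0, -1], [0, 1], [1, -1], [1, 0], [1, 1],
];

function initialBoard(): Board {
  const b: Board = new Array<Cell>(64).fill(0);
  b[27] = 2; b[28] = 1; b[35] = 1; b[36] = 2; // standard four-disc opening
  return b;
}

const onBoard = (r: number, c: number) => r >= 0 && r < 8 && c >= 0 && c < 8;
const count = (b: Board, p: Cell) => b.filter((x) => x === p).length;

// Squares `player` would flip by placing a disc at `pos`.
function flipsFor(b: Board, player: Cell, pos: number): number[] {
  if (b[pos] !== 0) return [];
  const opp: Cell = player === 1 ? 2 : 1;
  const flips: number[] = [];
  for (const [dr, dc] of DIRS) {
    const run: number[] = [];
    let r = Math.floor(pos / 8) + dr;
    let c = (pos % 8) + dc;
    while (onBoard(r, c) && b[r * 8 + c] === opp) {
      run.push(r * 8 + c);
      r += dr;
      c += dc;
    }
    // A run of opponent discs flips only if one of our own discs caps it.
    if (run.length > 0 && onBoard(r, c) && b[r * 8 + c] === player) flips.push(...run);
  }
  return flips;
}

function legalMoves(b: Board, player: Cell): number[] {
  const moves: number[] = [];
  for (let i = 0; i < 64; i++) if (flipsFor(b, player, i).length > 0) moves.push(i);
  return moves;
}

function play(b: Board, player: Cell, pos: number): Board {
  const next = b.slice();
  next[pos] = player;
  for (const i of flipsFor(b, player, pos)) next[i] = player;
  return next;
}

// mulberry32: a tiny seeded PRNG, so any failing game can be replayed.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Plays `games` random games, checking three properties after every move.
// Returns a description of the first violation, or null if none is found.
function checkMoveProperties(games: number, seed: number): string | null {
  const rand = mulberry32(seed);
  for (let g = 0; g < games; g++) {
    let board = initialBoard();
    let player: Cell = 1;
    for (let passes = 0; passes < 2; ) {
      const opp: Cell = player === 1 ? 2 : 1;
      const moves = legalMoves(board, player);
      if (moves.length === 0) {
        passes++; // no legal move means a pass; two in a row end the game
        player = opp;
        continue;
      }
      passes = 0;
      const pos = moves[Math.floor(rand() * moves.length)];
      const flipped = flipsFor(board, player, pos).length;
      const next = play(board, player, pos);
      const at = `seed ${seed}, game ${g}, move at ${pos}`;
      if (count(next, 0) !== count(board, 0) - 1) return `${at}: disc total did not grow by one`;
      if (count(next, player) !== count(board, player) + flipped + 1) return `${at}: mover count wrong`;
      if (count(next, opp) !== count(board, opp) - flipped) return `${at}: opponent count wrong`;
      board = next;
      player = opp;
    }
  }
  return null;
}
```

For these rules, `checkMoveProperties(200, 42)` returns `null`. If a later rule change broke one of the invariants, the returned string would carry the seed and game number needed to replay the exact failing game.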

Accelerated Agentic Playtesting

The video above is intentionally human-paced: it renders every move so the state transitions are visible, which makes it look slower than the underlying agent loop. In headless mode, the LLM can drive the same workflow directly through programmatic debug/MCP hooks without rendering the UI, and the same end-to-end sweep typically runs in about 1 to 2 minutes of real time rather than at the multi-minute pace of the demo.
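At the protocol level, a headless step comes down to a pair of MCP tool calls. MCP messages are JSON-RPC 2.0, and `tools/call` with a `name` and an `arguments` object is the standard way to invoke a server-side tool; the tool names used here (`get_game_state`, `play_move`) and the `{ turn }` state payload are hypothetical stand-ins for the game’s debug hooks. This TypeScript sketch only builds requests and parses results, leaving the transport (stdio or HTTP) out.

```typescript
// MCP tools/call request and result shapes (JSON-RPC 2.0).
// The tool names used with these helpers are hypothetical debug hooks.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

interface ToolCallResponse {
  jsonrpc: "2.0";
  id: number;
  result: {
    content: Array<{ type: string; text?: string }>;
    isError?: boolean;
  };
}

let nextRequestId = 1;

// Build a tools/call request; ids must not be reused within a session.
function toolCall(name: string, args: Record<string, unknown> = {}): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id: nextRequestId++,
    method: "tools/call",
    params: { name, arguments: args },
  };
}

// Extract the first text content block and parse it as JSON game state.
function parseToolState<T>(res: ToolCallResponse): T {
  if (res.result.isError) throw new Error(`tool call ${res.id} reported an error`);
  const text = res.result.content.find((c) => c.type === "text")?.text;
  if (text === undefined) throw new Error(`tool call ${res.id} returned no text content`);
  return JSON.parse(text) as T;
}

// One headless step: read the state, then act, with no UI rendered.
const readRequest = toolCall("get_game_state");
const moveRequest = toolCall("play_move", { row: 2, col: 3 });
```

Because state comes back as structured text rather than pixels, the agent can reason about it directly, which is where most of the speedup over the rendered demo comes from.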

For complex systems, verification requires more than static checks. I’m developing Accelerated Agentic Playtesting to validate user experience and game logic in ways that traditional unit tests cannot. I built this agent specifically to stress-test the state-management logic in my current project, Stellar Whiskers, for an upcoming feature that is being playtested at https://www.stellarwhiskers.com/rocket-reversi.

By leveraging the Chrome DevTools Protocol (CDP) and the Model Context Protocol (MCP), I can run agents that:

- Understand Internal State: They bypass the UI and query the game state directly from inside the browser instance rather than reading it off the rendered screen.

- Execute Intelligent Exploration: They don’t follow static scripts; they have high-level goals—like finding race conditions in multiplayer sync.

- Control Time & Network: They can throttle connections or “time-jump” to stress-test synchronization logic at speeds impossible for a human tester.
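Each of those capabilities maps onto ordinary CDP commands. The TypeScript sketch below only builds the raw JSON frames. `Runtime.evaluate`, `Input.dispatchMouseEvent`, `Network.emulateNetworkConditions`, and `Emulation.setVirtualTimePolicy` (an experimental method) are real CDP methods, while the `window.__gameDebug.getState()` hook and the `CdpScript` class are hypothetical. In practice each frame would be sent over the page target’s WebSocket debugger URL, or issued through a client such as Puppeteer’s `CDPSession`.

```typescript
// Raw CDP frames for the three capabilities. Method and parameter names are
// real CDP; `window.__gameDebug.getState()` and CdpScript are hypothetical.
interface CdpCommand {
  id: number;
  method: string;
  params?: Record<string, unknown>;
}

class CdpScript {
  private nextId = 1;
  readonly commands: CdpCommand[] = [];

  private push(method: string, params?: Record<string, unknown>): this {
    const cmd: CdpCommand = { id: this.nextId++, method };
    if (params) cmd.params = params;
    this.commands.push(cmd);
    return this;
  }

  // Read internal state through a debug hook instead of scraping the UI.
  readState(): this {
    return this.push("Runtime.evaluate", {
      expression: "window.__gameDebug.getState()",
      returnByValue: true, // return the result as JSON, not an object handle
    });
  }

  // A full click is a press followed by a release at the same point.
  click(x: number, y: number): this {
    const base = { x, y, button: "left", clickCount: 1 };
    this.push("Input.dispatchMouseEvent", { type: "mousePressed", ...base });
    return this.push("Input.dispatchMouseEvent", { type: "mouseReleased", ...base });
  }

  // Degrade the link: extra latency (ms) and throughput caps (bytes/sec).
  throttle(latencyMs: number, bytesPerSec: number): this {
    this.push("Network.enable");
    return this.push("Network.emulateNetworkConditions", {
      offline: false,
      latency: latencyMs,
      downloadThroughput: bytesPerSec,
      uploadThroughput: bytesPerSec,
    });
  }

  // Experimental: let the page's virtual clock skip ahead to pending timers
  // instead of waiting in real time, for up to budgetMs of virtual time.
  timeJump(budgetMs: number): this {
    return this.push("Emulation.setVirtualTimePolicy", { policy: "advance", budget: budgetMs });
  }

  // One JSON frame per command, ready for the target's WebSocket.
  frames(): string[] {
    return this.commands.map((c) => JSON.stringify(c));
  }
}
```

For example, `new CdpScript().readState().click(100, 200).throttle(300, 50000).timeJump(5000)` queues six commands with ids 1 through 6. Chaining keeps an agent’s exploration plan readable as a single script it can log and replay.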

```mermaid
sequenceDiagram
    participant AgentA as Agent A (Attacker)
    participant AgentB as Agent B (Defender)
    participant BrowserA as Browser Instance A
    participant BrowserB as Browser Instance B
    AgentA->>BrowserA: CDP.SendCommand: 'Input.dispatchMouseEvent'
    BrowserA->>GameServer: WebSocket.Send: 'Action:Fire'
    GameServer-->>BrowserB: WebSocket.Receive: 'StateUpdate:Hit'
    AgentB->>BrowserB: MCP.Query: 'GameState.Health'
    AgentB->>AgentA: Report: 'Desync detected'
```
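The “Desync detected” report at the end of that exchange needs a concrete definition. One simple option, sketched here in TypeScript under the assumption that each agent can pull a JSON snapshot of shared state from its own browser, is to let in-flight updates settle and then diff the two clients’ views. The `Json` type, `diffState`, and the sample snapshots are illustrative, not part of the game.

```typescript
// Illustrative JSON snapshot type; real snapshots would come from each
// browser's debug hook after in-flight updates have settled.
type Json = null | boolean | number | string | Json[] | { [key: string]: Json };

function field(o: object, key: string): Json | undefined {
  return (o as Record<string, Json | undefined>)[key];
}

// Returns every path where two clients' views of shared state disagree.
function diffState(a: Json, b: Json, path = "$"): string[] {
  if (a === b) return [];
  if (
    a === null || b === null ||
    typeof a !== "object" || typeof b !== "object" ||
    Array.isArray(a) !== Array.isArray(b)
  ) {
    return [path]; // differing primitives, or an object vs. an array
  }
  // Union of keys from both sides (array indices appear as "0", "1", ...).
  const keys = Object.keys(a).concat(Object.keys(b).filter((k) => !(k in a)));
  const out: string[] = [];
  for (const key of keys) {
    const av = field(a, key);
    const bv = field(b, key);
    const p = `${path}.${key}`;
    if (av === undefined || bv === undefined) out.push(p); // present on one side only
    else out.push(...diffState(av, bv, p));
  }
  return out;
}

// Agent A's view after firing vs. Agent B's view after the hit update.
const viewA: Json = { turn: 4, players: [{ id: "a", health: 100 }, { id: "b", health: 70 }] };
const viewB: Json = { turn: 4, players: [{ id: "a", health: 100 }, { id: "b", health: 100 }] };
```

Here `diffState(viewA, viewB)` returns `["$.players.1.health"]`: Browser B never applied the hit that Browser A believes landed, which is exactly the condition Agent B reports back. Returning paths rather than a boolean gives the agent something specific to investigate next.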

Technical Note: The Reality of State-Sync

Dealing with ghost item exploits is the classic “distributed systems nightmare”: a TOCTOU (time-of-check to time-of-use) race condition in which client-side prediction and the authoritative server fall out of sync. If the game’s state isn’t perfectly transactional, a dropped packet or an oddly timed command opens a causality gap. Traditional unit tests are largely ineffective here because they can’t simulate the interleaving of events across network boundaries. That’s why I’m using MCP-based agents to fuzz these state transitions: I’m testing the engine’s actual causality, not just the API endpoints.
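The core of the ghost-item problem fits in a few lines. This TypeScript sketch models a hypothetical, non-transactional pickup handler in which the check (“is the item still there?”) and the use (“grant it and remove it”) are separate steps, then runs every interleaving of two clients’ requests. Exhaustive enumeration like this is a small model-checking exercise rather than fuzzing, but it exposes the same causality gap the agents probe for: four of the six possible schedules hand out two copies of the item, while making check-and-use a single atomic step removes every duplicate.

```typescript
// Hypothetical model of a pickup handler; not the actual game server.
interface World {
  available: boolean; // is the item still on the ground?
  granted: number; // copies of the item handed out
  sawAvailable: Record<string, boolean>; // each request's result from its check
}

type Step = { client: string; run: (w: World) => void };

function pickupSteps(client: string, atomic: boolean): Step[] {
  const check = (w: World) => { w.sawAvailable[client] = w.available; };
  const use = (w: World) => {
    if (w.sawAvailable[client]) { w.granted++; w.available = false; }
  };
  return atomic
    ? [{ client, run: (w) => { check(w); use(w); } }] // one indivisible step
    : [{ client, run: check }, { client, run: use }]; // others can run in between
}

// Every ordering of two step lists that preserves each client's own order.
function interleavings<T>(a: T[], b: T[]): T[][] {
  if (a.length === 0) return [b];
  if (b.length === 0) return [a];
  return [
    ...interleavings(a.slice(1), b).map((rest) => [a[0], ...rest]),
    ...interleavings(a, b.slice(1)).map((rest) => [b[0], ...rest]),
  ];
}

// Run every schedule and collect the ones that duplicate the item.
function findGhostItems(atomic: boolean): string[][] {
  const bad: string[][] = [];
  for (const schedule of interleavings(pickupSteps("A", atomic), pickupSteps("B", atomic))) {
    const w: World = { available: true, granted: 0, sawAvailable: {} };
    schedule.forEach((s) => s.run(w));
    if (w.granted > 1) bad.push(schedule.map((s) => s.client));
  }
  return bad;
}
```

`findGhostItems(false)` returns four schedules, the first being `["A", "B", "A", "B"]` (both clients check before either uses), and `findGhostItems(true)` returns none. Real network interleavings are far too numerous to enumerate like this, which is why the agents explore them with guided fuzzing instead.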

Why This Matters

This isn’t about discarding proven tools like CI/CD; it’s about scaling human ingenuity. By formalizing my process and layering agency on top of my existing infrastructure, I gain:

- Increased Leverage: I spend my time on high-level design and critical architectural review, while the agents handle the exhaustive state-checking.

- Improved Reliability: I can run state validation that would be prohibitively expensive to script manually.

- Accelerated Innovation: I move from concept to verified, deployed feature in a fraction of the time, without compromising the deterministic safety of my pipelines.

The goal remains what it has always been: building reliable, privacy-focused systems that scale. Agency just happens to be the most effective tool in the kit right now.