Last year, I wrote about my 2025 hosting stack, which centered on Astro, Azure Blob Storage, and Cloudflare Workers. That setup served me well, providing a fast, scalable, and cost-effective solution. But as the landscape of software development evolves, so does my approach. For 2026, I’m embracing a more fundamental shift: moving from a workflow of continuous integration to one of continuous agency.

My core focus is no longer just on automating deployment, but on automating development itself. I’m building a system where I translate ideas into detailed system designs and formal specifications, and then empower autonomous agents to write, verify, and deploy the code.

The Vision: Specification-Driven Engineering

The central idea is to elevate my role. While I still write code, I spend significantly more time iterating on system design, formalizing specifications, and performing code reviews. My development workflow is a partnership between myself and a team of AI agents.

It works like this:

- In-Editor Agent: An agent within my Zed editor acts as a pair programmer, helping me scaffold code from specs or tackle implementation details.

- Autonomous Agents: A team of autonomous agents can be prompted to take on larger tasks, or they can run on their own cycles, performing maintenance or implementing new features. They open pull requests for my review, just like a human team member.

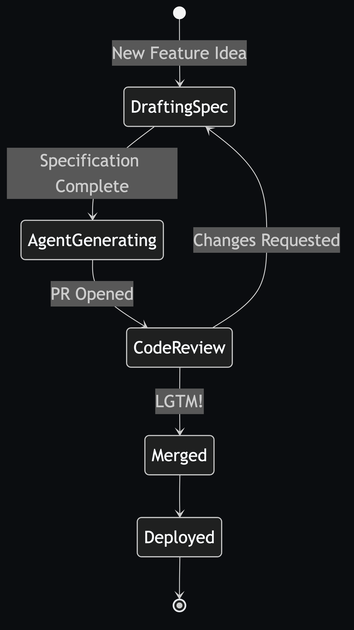

A particularly powerful technique in this workflow is turning visual diagrams directly into code. For example, a Mermaid state diagram that describes application logic can be parsed and transformed into a working state machine. The specification becomes the source of truth in a very literal sense.

```mermaid
stateDiagram-v2
    [*] --> DraftingSpec : New Feature Idea
    DraftingSpec --> AgentGenerating : Specification Complete
    AgentGenerating --> CodeReview : PR Opened
    CodeReview --> Merged : LGTM!
    CodeReview --> DraftingSpec : Changes Requested
    Merged --> Deployed
    Deployed --> [*]
```

I want to keep pushing this toward declarative, deterministic code generated by trusted tools. The idea is that a diagram like this is a spec, and the generated code becomes a predictable, testable artifact. Here’s the kind of TypeScript I’d want from an island component, emitted directly from that spec:

```tsx
import { useMemo, useReducer } from "react";

// One state per node in the diagram (the [*] pseudo-state is omitted).
type State =
  | "DraftingSpec"
  | "AgentGenerating"
  | "CodeReview"
  | "Merged"
  | "Deployed";

// One event per labelled edge in the diagram.
type Event =
  | { type: "SPEC_DONE" }
  | { type: "PR_OPENED" }
  | { type: "REQUEST_CHANGES" }
  | { type: "APPROVE" }
  | { type: "DEPLOY" };

// Transition table: for each state, the events it accepts and where they lead.
const transitions: Record<State, Partial<Record<Event["type"], State>>> = {
  DraftingSpec: { SPEC_DONE: "AgentGenerating" },
  AgentGenerating: { PR_OPENED: "CodeReview" },
  CodeReview: { REQUEST_CHANGES: "DraftingSpec", APPROVE: "Merged" },
  Merged: { DEPLOY: "Deployed" },
  Deployed: {},
};

// Events that are not valid in the current state leave it unchanged.
function reducer(state: State, event: Event): State {
  return transitions[state][event.type] ?? state;
}

export function WorkflowIsland() {
  const [state, dispatch] = useReducer(reducer, "DraftingSpec");

  // Only offer the events that are valid from the current state.
  const nextActions = useMemo(
    () => Object.keys(transitions[state]) as Event["type"][],
    [state],
  );

  return (
    <div className="rounded-xl border border-slate-200 bg-white p-4 shadow-sm">
      <p className="text-sm uppercase tracking-wide text-slate-500">
        State
      </p>
      <p className="text-lg font-semibold text-slate-900">{state}</p>
      <div className="mt-4 flex flex-wrap gap-2">
        {nextActions.map((type) => (
          <button
            key={type}
            type="button"
            onClick={() => dispatch({ type })}
            className="rounded-md border border-slate-200 px-3 py-1.5 text-sm text-slate-700 hover:bg-slate-50"
          >
            {type}
          </button>
        ))}
      </div>
    </div>
  );
}
```
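To make the "diagram is the spec" idea concrete, here is a minimal sketch of how a transition table could be derived from the Mermaid source. It only understands the `From --> To : Label` edge subset of `stateDiagram-v2` used above. The names `parseStateDiagram` and `toTransitionTable` are hypothetical, and using the edge label as the event name is an assumption (the component above uses hand-picked names such as `SPEC_DONE` instead).

```typescript
// Hypothetical sketch: turn the edge subset of Mermaid stateDiagram-v2
// ("From --> To : Label") into a nested transition table.
type Edge = { from: string; to: string; label?: string };
type TransitionTable = Record<string, Record<string, string>>;

const TERMINAL = "[*]"; // Mermaid's start/end pseudo-state

function parseStateDiagram(source: string): Edge[] {
  const edgePattern = /^(\[\*\]|\w+)\s*-->\s*(\[\*\]|\w+)(?:\s*:\s*(.+))?$/;
  const edges: Edge[] = [];
  for (const rawLine of source.split("\n")) {
    const match = edgePattern.exec(rawLine.trim());
    if (!match) continue; // skips the header, blank lines, and unsupported syntax
    edges.push({ from: match[1], to: match[2], label: match[3]?.trim() });
  }
  return edges;
}

function toTransitionTable(edges: Edge[]): TransitionTable {
  const table: TransitionTable = {};
  for (const { from, to, label } of edges) {
    // Every real state gets a row, even if it has no outgoing events.
    for (const state of [from, to]) {
      if (state !== TERMINAL && !(state in table)) table[state] = {};
    }
    // Entry and exit edges involve the pseudo-state and carry no event.
    if (from === TERMINAL || to === TERMINAL) continue;
    // Assumption: the label is the event name; unlabelled edges get a synthetic one.
    const event = label ?? `TO_${to}`;
    table[from][event] = to;
  }
  return table;
}
```

Fed the diagram above, this produces a `CodeReview` row that maps `LGTM!` to `Merged` and `Changes Requested` to `DraftingSpec`, and an empty row for `Deployed`. A real generator would also need to normalize labels into identifiers and reject ambiguous diagrams.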

The goal is to have a system that can take a high-level requirement, translate it into a formal specification, and then generate, verify, and deploy the resulting code.

A Declarative Approach to Agent Tooling

A critical piece of this puzzle is how agents interact with the world. Instead of hard-coding tools, I’m using a declarative approach to generate them dynamically. For example, by introspecting an API surface such as a boto3 client in Python, whose methods are themselves generated from service definitions, I can automatically generate a suite of tools that an agent can use to interact with AWS services.

This means the agent’s capabilities are not fixed but can expand based on the specifications of the systems it needs to manage. It’s a powerful way to ensure agents have the right tools for the job, generated directly from the source of truth—the service definition itself.
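As a rough illustration of the pattern (not the actual implementation, which the text describes in terms of Python and boto3), the sketch below derives agent tool descriptions from a declarative service definition. The `ServiceDef` and `ToolSpec` shapes, the `generateTools` function, and the `storage` service are all hypothetical and far simpler than a real service model.

```typescript
// Hypothetical, simplified service definition. Real service models
// carry far more detail (nested shapes, errors, pagination, and so on).
type ParamType = "string" | "number" | "boolean";

interface OperationDef {
  description: string;
  params: Record<string, { type: ParamType; required?: boolean }>;
}

interface ServiceDef {
  service: string;
  operations: Record<string, OperationDef>;
}

// Tool description in a JSON-Schema-like shape that an agent runtime could consume.
interface ToolSpec {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: ParamType }>;
    required: string[];
  };
}

// One tool per operation; nothing here is written by hand per tool.
function generateTools(def: ServiceDef): ToolSpec[] {
  return Object.keys(def.operations).map((opName): ToolSpec => {
    const op = def.operations[opName];
    const properties: Record<string, { type: ParamType }> = {};
    const required: string[] = [];
    for (const paramName of Object.keys(op.params)) {
      const param = op.params[paramName];
      properties[paramName] = { type: param.type };
      if (param.required) required.push(paramName);
    }
    return {
      name: `${def.service}_${opName}`,
      description: op.description,
      inputSchema: { type: "object", properties, required },
    };
  });
}

// Illustrative definition only; this is not a real AWS model.
const storage: ServiceDef = {
  service: "storage",
  operations: {
    listObjects: {
      description: "List objects in a bucket.",
      params: { bucket: { type: "string", required: true }, prefix: { type: "string" } },
    },
    deleteObject: {
      description: "Delete a single object.",
      params: { bucket: { type: "string", required: true }, key: { type: "string", required: true } },
    },
  },
};

const tools = generateTools(storage);
console.log(tools.map((tool) => tool.name));
```

Adding an operation to the definition adds a tool without touching the generator, which is the property the declarative approach is after.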

The 2026 Stack: Simpler, Faster, and More Flexible

With this new workflow, my hosting stack has also evolved. While I can still leverage Azure, GCP, or AWS for heavy-lifting tasks, they are no longer on the critical path for every project.

My default stack for 2026 is simpler and more integrated:

- Framework: Astro remains my go-to for its performance and “islands architecture,” which aligns perfectly with a component-based, spec-driven approach.

- Hosting & Compute: Cloudflare Pages & Workers are now the foundation. They provide a seamless platform for deploying static sites and running the autonomous agents that manage them. This removes the need for a separate object storage provider like Azure Blob Storage for most of my use cases.

This streamlined stack reduces complexity and operational overhead, allowing me to focus more on the specifications and the agents that implement them.

Influences and Direction

This workflow is informed by several key developments and philosophies. A major practical catalyst has been tighter integration between Astro-based content workflows and Cloudflare-hosted automation, where the same edge platform can receive GitHub events, run agent orchestration, and post back to pull requests.

Philosophically, this approach is about a gradual shift from probabilistic code to verified code. I often start with LLM-generated code to quickly prototype an idea, but the long-term goal is to build trusted, declarative tools that generate and verify code. This methodology is heavily inspired by the rigor of standards bodies like the IETF and by formal verification work such as the Lean proof assistant and the formally verified seL4 microkernel. It also draws on my Type-Safe Comms Crate (TSCC) experiment, a project from a Symbolica hackathon to verify browser code against web standards using lightweight verification methods like Algebraic Data Types (ADTs) and Dependent Types, which I plan to write more about in the future.

How It Works in Practice

The current Spec Orchestrator flow is no longer hypothetical; it’s event-driven and branch-aware:

- Webhook Ingestion: A Cloudflare Worker receives `pull_request`, `push`, and `issue_comment` events from GitHub and verifies signatures.

- Branch-Aware Routing: The hub resolves source/base branches, ignores `agent/*` loops, and can run on normal pushes even when `specs/*` did not change.

- Run Modes: The hub chooses `review`, `implement`, or `refine` mode. Spec-only updates can enter implement mode, while mixed code changes stay in review mode.

- Implementation Gate: For spec-only pushes, implementation is gated by explicit maintainer intent (label or command), keeping unwanted autonomous edits out of active branches.

- Comment-First Review: The agent posts plan and review artifacts as PR comments with stable markers, instead of polluting repository history with run-artifact files.

- Inline Mergeable Suggestions: When anchors are available, the agent emits GitHub `suggestion` blocks directly on changed lines; when anchors fail, it falls back to summary comments.

- Outcome Scorecard: Reviews now include weighted subscores (`readability`, `correctness`, `safety`, `seo_ai`) and an explicit impact score formula tuned per repository profile.

- Scoped Code Commits: For deterministic tasks, the agent can commit small implementation patches to an agent branch and open or reuse a PR against the working branch.
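The routing rules in this list can be sketched as a single pure function. This is a simplified reading of the rules, not the orchestrator's actual code: `HubEvent`, `decideRun`, and the `maintainerApproved` flag (standing in for the label or command) are hypothetical names. The text does not say when `refine` is chosen or what happens to a spec-only push without maintainer intent, so the sketch never returns `refine` and assumes the ungated case falls back to `review`.

```typescript
type RunMode = "review" | "implement" | "refine";

// Hypothetical, already-normalized view of a GitHub push or pull request event.
interface HubEvent {
  sourceBranch: string;
  changedFiles: string[];
  maintainerApproved: boolean; // stands in for "label or command present"
}

type Decision =
  | { action: "skip"; reason: string }
  | { action: "run"; mode: RunMode };

function isAgentBranch(branch: string): boolean {
  return /^agent\//.test(branch);
}

function isSpecFile(path: string): boolean {
  return /^specs\//.test(path);
}

function decideRun(event: HubEvent): Decision {
  // Loop guard: never react to commits the agent itself pushed.
  if (isAgentBranch(event.sourceBranch)) {
    return { action: "skip", reason: "agent branch" };
  }

  const specOnly =
    event.changedFiles.length > 0 && event.changedFiles.every(isSpecFile);

  // Implementation gate: spec-only changes may be implemented,
  // but only with explicit maintainer intent.
  if (specOnly && event.maintainerApproved) {
    return { action: "run", mode: "implement" };
  }

  // Mixed changes and ordinary pushes stay in review mode.
  // Assumption: ungated spec-only pushes are reviewed as well.
  return { action: "run", mode: "review" };
}
```

Keeping the decision free of I/O means the same function can be unit-tested with plain objects and then called from the Worker after signature verification and payload parsing.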

Why This Change?

This isn’t just about automation; it’s about scaling myself. By formalizing my development process into a series of specifications, I can:

- Increase my leverage: I can oversee more projects and features because my primary output is high-level design, not low-level code.

- Improve consistency: Agents build code based on templates and rules defined in the spec, leading to a more uniform and maintainable codebase.

- Enforce quality: The verification step ensures that every change, whether human- or AI-written, adheres to the system’s design principles.

The shift to agent-driven engineering is an exciting new chapter. It’s a move towards a future where developers can build faster, more reliably, and at a much larger scale, by treating software creation as a formal system of specifications and automated execution.

FAQ

What changed in this update?

The workflow moved from a spec-triggered prototype to a branch-aware PR agent with explicit run modes, implementation gating, and inline GitHub suggestions.
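For the comment and suggestion part of that change, a minimal sketch of how the comment bodies might be assembled is shown below. The `suggestion` fence is GitHub's own review-comment syntax. Everything else is an assumption for illustration: the `Finding` shape, the function names, and the `<!-- spec-orchestrator:... -->` marker format. The sketch also reads "stable markers" as a way to find and update an earlier comment rather than posting a duplicate.

```typescript
// Built from single backticks so this example does not contain a literal fence.
const FENCE = "`" + "`" + "`";

// Hypothetical marker format: an HTML comment is invisible in rendered Markdown,
// so it can identify a comment without adding noise for readers.
function marker(kind: string): string {
  return "<!-- spec-orchestrator:" + kind + " -->";
}

interface Finding {
  path: string;
  message: string;
  line?: number; // anchor on a changed line, when one could be resolved
  replacement?: string; // proposed new content for that line
}

// Inline comment body with a GitHub suggestion block, or null when there is no anchor.
function renderInlineComment(finding: Finding): string | null {
  if (finding.line === undefined || finding.replacement === undefined) return null;
  return [finding.message, "", FENCE + "suggestion", finding.replacement, FENCE].join("\n");
}

// Fallback: one summary comment listing the findings that could not be anchored.
function renderSummary(kind: string, findings: Finding[]): string {
  const bullets = findings
    .filter((finding) => renderInlineComment(finding) === null)
    .map((finding) => "- " + finding.path + ": " + finding.message);
  return [marker(kind), "", ...bullets].join("\n");
}

// Find a previous comment by its marker so it can be edited rather than duplicated.
function findExisting(
  comments: { id: number; body: string }[],
  kind: string,
): number | undefined {
  for (const comment of comments) {
    if (comment.body.indexOf(marker(kind)) !== -1) return comment.id;
  }
  return undefined;
}
```

A suggestion whose replacement text itself contains a triple-backtick fence would need a longer outer fence, which this sketch does not handle.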

Why does this matter now?

It makes the system safer and more useful in day-to-day development: better signal-to-noise in PRs, fewer accidental agent edits, and more actionable review output.

What should readers do next?

If you’re building something similar, start with comment-first reviews, add branch/risk gates before auto-commit behavior, and instrument a repo-specific scorecard so quality trends are visible.
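As a starting point for the scorecard, the sketch below computes an impact score as a weighted average of the four subscores. The post does not give the actual formula, so the weighted average, the 0 to 100 range, and both weight profiles are assumptions made for illustration.

```typescript
type Subscore = "readability" | "correctness" | "safety" | "seo_ai";
const SUBSCORES: Subscore[] = ["readability", "correctness", "safety", "seo_ai"];

type Scores = Record<Subscore, number>; // assumed range: 0 to 100
type Weights = Record<Subscore, number>; // non-negative, relative weights

// Assumed formula: weighted average of the subscores.
function impactScore(scores: Scores, weights: Weights): number {
  let weighted = 0;
  let totalWeight = 0;
  for (const key of SUBSCORES) {
    if (weights[key] < 0) throw new Error("negative weight for " + key);
    weighted += scores[key] * weights[key];
    totalWeight += weights[key];
  }
  if (totalWeight === 0) throw new Error("at least one weight must be positive");
  return weighted / totalWeight;
}

// Hypothetical repository profiles: a content site cares about discoverability,
// a library cares about correctness and safety.
const blogWeights: Weights = { readability: 3, correctness: 2, safety: 1, seo_ai: 4 };
const libraryWeights: Weights = { readability: 2, correctness: 5, safety: 3, seo_ai: 0 };

const review: Scores = { readability: 90, correctness: 70, safety: 80, seo_ai: 50 };

console.log(impactScore(review, blogWeights)); // 69
console.log(impactScore(review, libraryWeights)); // 77
```

The same review scores land at 69 under the blog profile and 77 under the library profile, which is the point of tuning weights per repository: the number reflects what that repository is trying to optimize.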

Key Takeaways

- Triggering on both PR and push events gives better coverage than spec-file changes alone.

- Comment-first artifacts plus inline `suggestion` blocks keep collaboration high-signal and easy to merge.

- Outcome scoring is more credible when it is weighted by repo goals instead of generic one-size-fits-all metrics.