Last month I announced major improvements to the Umami MCP LLM reporter: minimal dependencies, newsletter tracking, and fixed UTM reporting. That was just the beginning.

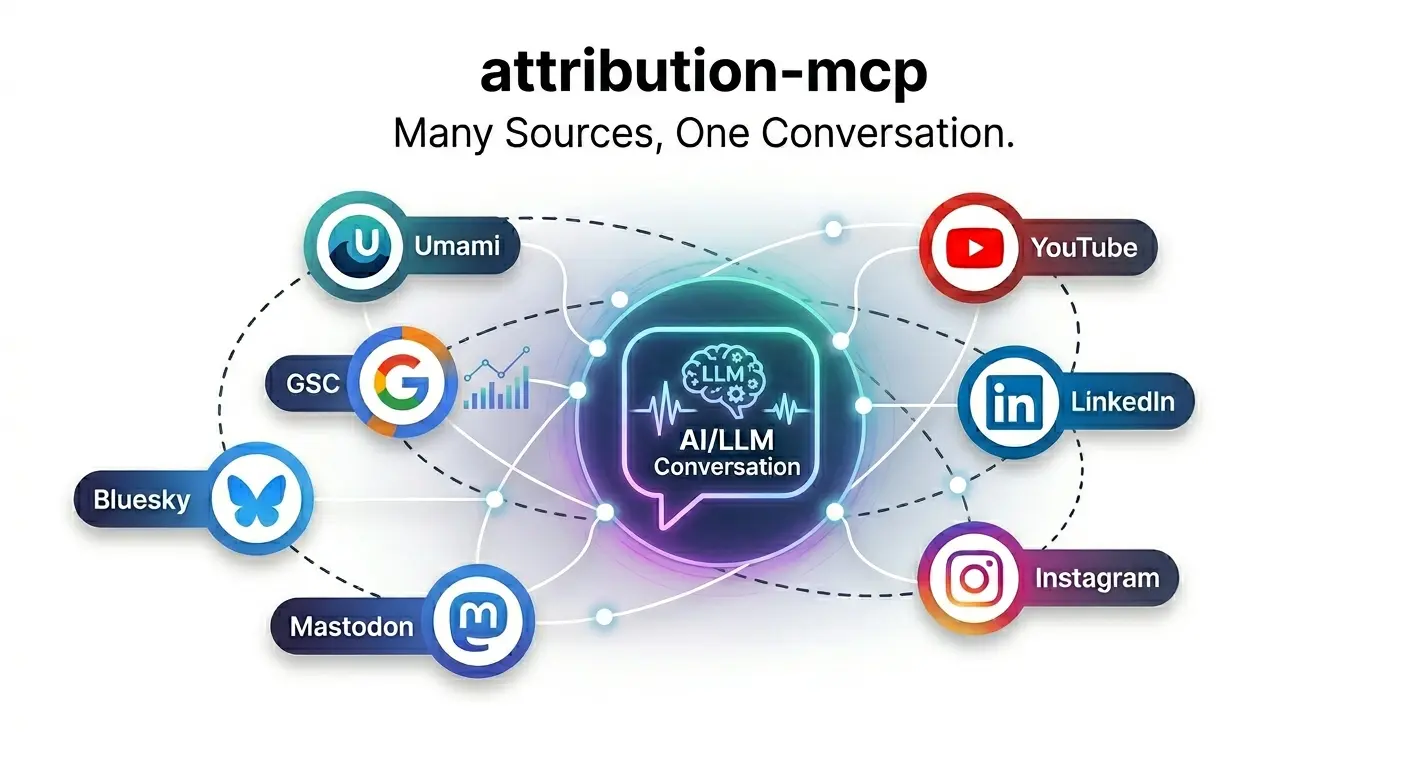

Today I’m excited to share the next evolution: attribution-mcp is now a collection of MCP servers that connect to your favorite LLM and pull data from:

- 📊 Web Analytics: Umami (cloud or self-hosted)

- 🔍 Search Performance: Google Search Console

- 📹 Video: YouTube

- 📱 Social Media: Bluesky, Mastodon, LinkedIn, Instagram/Threads

This means you can now chat with your LLM about how your sites are performing and where the growth opportunities are, across all your platforms, in one conversation.

Why This Matters

The original Umami-only approach was powerful, but it left critical questions unanswered:

- “Did my LinkedIn post drive traffic to the new blog post?”

- “Which YouTube videos correlate with search traffic spikes?”

- “Is my Bluesky engagement translating to website visits?”

- “Where should I focus my content efforts for maximum growth?”

You can’t answer these with siloed data. Attribution requires aggregation.

What Changed

From Single Server to Multi-Platform Collection

The original umami-mcp-llm-report was a single-purpose tool. The new attribution-mcp is a monorepo of specialized MCP servers:

    attribution-mcp/
    ├── packages/
    │   ├── umami-mcp/            # Web analytics
    │   ├── gsc-mcp/              # Search Console
    │   ├── youtube-mcp/          # Video analytics
    │   ├── mastodon-mcp/         # Fediverse social
    │   ├── bluesky-mcp/          # AT Protocol social
    │   ├── linkedin-mcp/         # Professional social
    │   └── instagram-mcp/        # Meta social
    ├── libs/
    │   ├── attribution-schema/   # Unified data models
    │   ├── attribution-cache/    # SQLite caching layer
    │   └── attribution-auth/     # OAuth utilities
    └── run.py                    # Unified entry point

Each package is a standalone MCP server that can be run independently or orchestrated together.

Unified Schema & Caching

All platforms now share:

- Common data models from attribution-schema

- SQLite caching from attribution-cache (reduces API calls)

- Consistent authentication patterns from attribution-auth

This means the LLM sees data in a consistent format regardless of source, making cross-platform analysis reliable.

Chat Mode with Automatic Data Injection

The new run.py script provides an intelligent chat experience:

    uv run run.py \
      --start-date 2025-01-01 \
      --end-date 2025-12-31 \
      --website example.com \
      --chat \
      --ai-provider gemini-cli

Key features:

- ✅ Automatic data injection - Prevents AI hallucination by pre-loading real metrics

- ✅ Hallucination detection - Scans responses for fake numbers

- ✅ Cross-platform queries - Ask questions spanning multiple data sources

- ✅ Multiline input - Use Ctrl+D to end complex queries
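
One way a hallucination check like the one listed above could work is to compare every number in the model's reply against the numbers that were injected into the prompt. The sketch below is my guess at the idea, not the code in run.py; `extract_numbers` and `find_unsupported_numbers` are hypothetical names, and the reply text is invented for the example.

```python
import re

# Matches integers and decimals, with optional thousands separators
# (for example 12,883 or 91.28).
NUMBER_RE = re.compile(r"\d{1,3}(?:,\d{3})+(?:\.\d+)?|\d+(?:\.\d+)?")


def extract_numbers(text: str) -> set[float]:
    """Return every numeric value found in text, with separators removed."""
    return {float(match.replace(",", "")) for match in NUMBER_RE.findall(text)}


def find_unsupported_numbers(injected_data: str, response: str) -> set[float]:
    """Numbers stated in the response that never appeared in the injected data."""
    return extract_numbers(response) - extract_numbers(injected_data)


injected = "Pageviews: 12,883\nUnique Visitors: 6,851\nBounce Rate: 91.28%"
reply = "You had 12,883 pageviews from 6,851 visitors, plus 4,200 newsletter clicks."

print(find_unsupported_numbers(injected, reply))  # {4200.0}
```

A check this literal also flags legitimate derived values, such as a rounded percentage or a sum the model computed itself, so a practical version would need some tolerance or a list of allowed derivations.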

Example Queries

Here are real questions you can now ask:

Single Platform

"Show my top search queries from GSC last month"

"Which YouTube videos got the most views this quarter?"

"What are my top pages on Umami this week?"

Cross-Platform Attribution

"Compare engagement rates across Mastodon, Bluesky, and LinkedIn"

"Did my LinkedIn posts drive more traffic than Mastodon?"

"Show me content that performed well on both YouTube AND search"

"Which platform has the best engagement rate for tutorial content?"

Growth Insights

"My GSC impressions are up but clicks are down - what's happening?"

"What type of content should I create more of based on all platforms?"

"Correlate my social media campaigns with Umami traffic spikes"

Sample Output

Here’s a real cross-platform dashboard from my site:

📈 DASHBOARD ANALYSIS (GEMINI-CLI):

================================================================================

# Multi-Platform Analytics: rhelmer.org

**Period:** 2025-06-01 to 2026-03-24

## Web Analytics (Umami)

| Metric | Value |

| --- | --- |

| **Pageviews** | 12,883 |

| **Unique Visitors** | 6,851 |

| **Sessions** | 8,497 |

| **Bounce Rate** | 91.28% |

### Top Pages

- `/` - 2,447 pageviews

- `/blog/` - 2,127 pageviews

- `/blog/building-whiskers-engine-cpp-game-engine/` - 1,142 pageviews

## Search Performance (GSC)

| Metric | Value |

| --- | --- |

| **Impressions** | 45,234 |

| **Clicks** | 1,847 |

| **CTR** | 4.1% |

| **Avg Position** | 12.3 |

### Top Queries

- "umami analytics mcp" - 234 clicks

- "privacy-first analytics" - 189 clicks

- "attribution tracking llm" - 156 clicks

## Social Engagement

### LinkedIn

- **Followers**: 1,234 (+89 this period)

- **Top Post**: "Building Whiskers Engine" - 47 reactions, 12 shares

- **Profile Views**: 2,341

### Bluesky

- **Followers**: 892 (+156 this period)

- **Top Post**: "AI Analytics Tutorial" - 89 reposts, 34 replies

- **Engagement Rate**: 4.2%

### Mastodon

- **Followers**: 567 (+34 this period)

- **Top Post**: "Privacy Analytics Guide" - 67 boosts, 23 favorites

- **Engagement Rate**: 3.8%

### YouTube

- **Subscribers**: 445 (+67 this period)

- **Top Video**: "Whiskers Engine Demo" - 3,421 views, 4:32 avg watch time

- **Total Watch Time**: 847 hours

## Cross-Platform Insights

**Traffic Attribution:**

- LinkedIn drove 28 visitors to rhelmer.org (highest social referrer)

- YouTube descriptions drove 15 visitors (high intent traffic)

- Bluesky bio link drove 8 visitors

**Content Performance:**

- "Building Whiskers Engine" performed well across ALL platforms

- Tutorial content gets 3x more saves on LinkedIn vs. other platforms

- Video content on YouTube correlates with GSC query spikes

**Growth Opportunities:**

1. **LinkedIn is underutilized** - High engagement but low posting frequency

2. **YouTube SEO opportunity** - Videos rank in Google but titles could improve

3. **Bluesky growth potential** - Highest engagement rate, consider more posts

## Recommendations

1. **Double down on LinkedIn** - Post 2-3x/week, focus on technical tutorials

2. **Optimize YouTube titles** - Add target keywords for better GSC performance

3. **Cross-promote content** - Share YouTube videos on LinkedIn with custom CTAs

4. **Track UTM parameters** - Use campaign tags in all social bios and descriptions

================================================================================
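
The last recommendation in that report, tagging links with UTM parameters, is easy to script. This is a minimal sketch using only the standard library; `add_utm` is a hypothetical helper and not part of attribution-mcp, and the source, medium, and campaign values are placeholders.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url: str, source: str, medium: str, campaign: str) -> str:
    """Return url with utm_source, utm_medium and utm_campaign set."""
    parts = urlsplit(url)
    # Keep any query parameters the URL already has.
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query.update({"utm_source": source, "utm_medium": medium, "utm_campaign": campaign})
    return urlunsplit(parts._replace(query=urlencode(query)))


print(add_utm("https://example.com/blog/", "linkedin", "social", "launch"))
# https://example.com/blog/?utm_source=linkedin&utm_medium=social&utm_campaign=launch
```

Using a distinct `utm_source` per platform bio or video description is what lets the web analytics side separate a LinkedIn visit from a Bluesky one.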

Platform Setup

Each platform requires different credentials. Here’s the quick reference:

| Platform | Auth Method | Status |

|---|---|---|

| Umami | API Key / OAuth | ✅ Complete |

| Google Search Console | Service Account | ✅ Complete |

| YouTube | API Key / OAuth | ✅ Complete |

| Mastodon | OAuth | ✅ Complete |

| Bluesky | App Password | ✅ Complete |

| LinkedIn | OAuth | ✅ Complete |

| Instagram/Threads | OAuth | ✅ Complete |

Quick Start Configuration

# Clone the repo

git clone https://github.com/rhelmer/attribution-mcp

cd attribution-mcp

# Install dependencies

pip install uv

uv sync

# Configure environment

cp .env.example .env

# Edit .env with your credentials

Minimal config (Umami only):

UMAMI_URL=https://api.umami.is

UMAMI_API_KEY=your_api_key_here

Full multi-platform config:

# Umami (required)

UMAMI_URL=https://api.umami.is

UMAMI_API_KEY=your_api_key_here

# Google Search Console (optional)

GSC_SERVICE_ACCOUNT_FILE=/path/to/service-account-key.json

GSC_SITE_URL=https://your-domain.com/

# YouTube (optional)

YOUTUBE_API_KEY=your_api_key

YOUTUBE_CHANNEL_ID=your_channel_id

# Bluesky (optional)

BLUESKY_IDENTIFIER=your-handle.bsky.social

BLUESKY_PASSWORD=your_app_password

# Mastodon (optional)

MASTODON_INSTANCE=mastodon.social

MASTODON_CLIENT_ID=your_client_id

MASTODON_ACCESS_TOKEN=your_access_token

MASTODON_ACCOUNT_ID=your_account_id

# LinkedIn (optional)

LINKEDIN_CLIENT_ID=your_client_id

LINKEDIN_ACCESS_TOKEN=your_access_token

LINKEDIN_ORGANIZATION_ID=your_organization_id

# Instagram (optional)

INSTAGRAM_ACCESS_TOKEN=your_access_token

INSTAGRAM_BUSINESS_ACCOUNT_ID=your_business_account_id
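
Since every platform except Umami is optional, it helps to see at a glance which connectors a given environment would enable. The sketch below groups the variable names from the listing above by platform. It assumes a platform counts as configured only when all of its variables are set, which may not match how run.py decides; `enabled_platforms` and `missing_vars` are hypothetical helpers.

```python
from collections.abc import Mapping

# Variable names copied from the config listing; the grouping is an assumption.
REQUIRED_VARS: dict[str, list[str]] = {
    "umami": ["UMAMI_URL", "UMAMI_API_KEY"],
    "gsc": ["GSC_SERVICE_ACCOUNT_FILE", "GSC_SITE_URL"],
    "youtube": ["YOUTUBE_API_KEY", "YOUTUBE_CHANNEL_ID"],
    "bluesky": ["BLUESKY_IDENTIFIER", "BLUESKY_PASSWORD"],
    "mastodon": [
        "MASTODON_INSTANCE",
        "MASTODON_CLIENT_ID",
        "MASTODON_ACCESS_TOKEN",
        "MASTODON_ACCOUNT_ID",
    ],
    "linkedin": ["LINKEDIN_CLIENT_ID", "LINKEDIN_ACCESS_TOKEN", "LINKEDIN_ORGANIZATION_ID"],
    "instagram": ["INSTAGRAM_ACCESS_TOKEN", "INSTAGRAM_BUSINESS_ACCOUNT_ID"],
}


def enabled_platforms(env: Mapping[str, str]) -> list[str]:
    """Platforms whose variables are all present and non-empty."""
    return [name for name, keys in REQUIRED_VARS.items() if all(env.get(k) for k in keys)]


def missing_vars(env: Mapping[str, str], platform: str) -> list[str]:
    """Variables still needed before the given platform counts as configured."""
    return [key for key in REQUIRED_VARS[platform] if not env.get(key)]


sample_env = {
    "UMAMI_URL": "https://api.umami.is",
    "UMAMI_API_KEY": "your_api_key_here",
    "BLUESKY_IDENTIFIER": "your-handle.bsky.social",
}
print(enabled_platforms(sample_env))        # ['umami']
print(missing_vars(sample_env, "bluesky"))  # ['BLUESKY_PASSWORD']
```

Passing `os.environ` instead of `sample_env` checks the real environment, provided the `.env` file has already been loaded into it.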

AI Provider Options

The system supports multiple LLM backends:

# Google Gemini (recommended for accuracy)

uv run run.py --chat --ai-provider gemini-cli

# Local Ollama (privacy-focused, no API costs)

uv run run.py --chat --ai-provider ollama

# Cloudflare Workers (serverless, scalable)

uv run run.py --chat --ai-provider cloudflare

# Anthropic Claude

uv run run.py --chat --ai-provider claude

# OpenAI GPT

uv run run.py --chat --ai-provider gpt
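
For readers curious how a flag like `--ai-provider` can be validated, here is a small argparse sketch built from the flags and provider names shown in this post. It is not the parser in run.py, and the `gemini-cli` default is my assumption.

```python
import argparse

# Provider names as they appear in the commands above.
PROVIDERS = ["gemini-cli", "ollama", "cloudflare", "claude", "gpt"]


def build_parser() -> argparse.ArgumentParser:
    """Build a parser that accepts the flags used in this post."""
    parser = argparse.ArgumentParser(prog="run.py")
    parser.add_argument("--start-date")
    parser.add_argument("--end-date")
    parser.add_argument("--website")
    parser.add_argument("--chat", action="store_true")
    # `choices` rejects unknown providers; the default here is an assumption.
    parser.add_argument("--ai-provider", choices=PROVIDERS, default="gemini-cli")
    return parser


args = build_parser().parse_args(["--chat", "--ai-provider", "ollama"])
print(args.chat, args.ai_provider)  # True ollama
```

With `choices` in place, a typo such as `--ai-provider olama` fails immediately with a usage message instead of surfacing later as a connection error.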

Architecture Deep Dive

The MCP Pattern

Each platform connector follows the Model Context Protocol pattern:

    # Each package exposes tools like:
    tools = [
        "get_metrics",      # Fetch analytics data
        "get_top_content",  # Get top performing items
        "get_followers",    # Get follower counts
        "get_engagement",   # Get engagement stats
    ]

    # The LLM can call these tools directly
    # (`mcp` here stands for an already-connected MCP client session):
    response = await mcp.call_tool(
        "get_metrics",
        arguments={
            "start_date": "2025-01-01",
            "end_date": "2025-12-31",
            "metrics": ["pageviews", "visitors"],
        },
    )
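
To make the name-plus-arguments dispatch behind `call_tool` concrete, here is a toy registry written with only the standard library. It is not the MCP SDK and not how the packages are implemented; `ToolRegistry` is a hypothetical stand-in, and the `get_metrics` handler returns placeholder zeros instead of calling an API.

```python
import asyncio
from typing import Any, Awaitable, Callable

ToolFn = Callable[..., Awaitable[Any]]


class ToolRegistry:
    """Toy stand-in for an MCP server: maps tool names to async handlers."""

    def __init__(self) -> None:
        self._tools: dict[str, ToolFn] = {}

    def tool(self, fn: ToolFn) -> ToolFn:
        """Decorator that registers fn under its function name."""
        self._tools[fn.__name__] = fn
        return fn

    def list_tools(self) -> list[str]:
        return sorted(self._tools)

    async def call_tool(self, name: str, arguments: dict[str, Any]) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return await self._tools[name](**arguments)


registry = ToolRegistry()


@registry.tool
async def get_metrics(start_date: str, end_date: str, metrics: list[str]) -> dict[str, Any]:
    # A real connector would query the platform API here; this returns placeholders.
    return {"start_date": start_date, "end_date": end_date, "metrics": {m: 0 for m in metrics}}


async def main() -> dict[str, Any]:
    return await registry.call_tool(
        "get_metrics",
        arguments={
            "start_date": "2025-01-01",
            "end_date": "2025-12-31",
            "metrics": ["pageviews", "visitors"],
        },
    )


result = asyncio.run(main())
print(result["metrics"])  # {'pageviews': 0, 'visitors': 0}
```

Because every connector answers to the same small set of tool names, the model can ask each platform the same question and get answers it can compare.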

Caching Strategy

To minimize API calls and respect rate limits:

    # All platforms use SQLite caching
    cache = AttributionCache(db_path=".attribution_cache.db")

    # Cached for 5 minutes by default
    data = cache.get_or_fetch(
        key=f"umami:metrics:{website_id}:{start_date}:{end_date}",
        fetch_fn=umami_client.get_metrics,
        ttl_seconds=300,
    )

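Repeated queries for the same key within the TTL are served from the local cache, so a chat session with many follow-up questions makes far fewer API calls and is much less likely to hit rate limits.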
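
If you want to see the get-or-fetch idea in isolation, the sketch below implements it with the standard library's sqlite3 module. `TTLCache` is a hypothetical stand-in, not the AttributionCache class; the table layout is my own, and it assumes `fetch_fn` takes no arguments and returns JSON-serializable data.

```python
import json
import sqlite3
import time
from typing import Any, Callable


class TTLCache:
    """Minimal SQLite-backed cache with per-entry expiry."""

    def __init__(self, db_path: str = ":memory:") -> None:
        self.conn = sqlite3.connect(db_path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS cache "
            "(key TEXT PRIMARY KEY, value TEXT, expires_at REAL)"
        )

    def get_or_fetch(self, key: str, fetch_fn: Callable[[], Any], ttl_seconds: int = 300) -> Any:
        now = time.time()
        row = self.conn.execute(
            "SELECT value FROM cache WHERE key = ? AND expires_at > ?", (key, now)
        ).fetchone()
        if row is not None:
            return json.loads(row[0])  # fresh entry: skip the API call
        value = fetch_fn()  # missing or expired: fetch and store
        self.conn.execute(
            "INSERT OR REPLACE INTO cache (key, value, expires_at) VALUES (?, ?, ?)",
            (key, json.dumps(value), now + ttl_seconds),
        )
        self.conn.commit()
        return value


calls: list[int] = []


def fake_fetch() -> dict[str, int]:
    """Stand-in for an API call; records how often it runs."""
    calls.append(1)
    return {"pageviews": 123}


cache = TTLCache()
first = cache.get_or_fetch("umami:metrics:demo", fake_fetch)
second = cache.get_or_fetch("umami:metrics:demo", fake_fetch)
print(first == second, len(calls))  # True 1
```

Putting the website ID and date range in the key, as the snippet above does, keeps a query for a different period from being answered with stale data for another one.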

Unified Schema

All platforms map to a common schema:

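    from typing import Any

    from pydantic import BaseModel  # assuming BaseModel comes from pydantic


    class PlatformMetrics(BaseModel):
        platform: str  # "umami", "gsc", "youtube", etc.
        start_date: str
        end_date: str
        metrics: dict[str, Any]
        top_content: list[ContentItem]  # ContentItem model not shown here
        followers: int | None
        engagement_rate: float | None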

This allows the LLM to compare apples to apples across platforms.
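
Here is a rough illustration of what mapping to a common schema buys you. It uses plain dataclasses to stay dependency-free, and the raw payloads, their field names, and the engagement-rate formula (interactions divided by followers) are all invented for the example; the real adapters in attribution-schema may look quite different.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class Metrics:
    """Simplified version of the shared shape (top_content omitted)."""
    platform: str
    start_date: str
    end_date: str
    metrics: dict[str, Any] = field(default_factory=dict)
    followers: Optional[int] = None
    engagement_rate: Optional[float] = None


def from_mastodon(raw: dict[str, int], start: str, end: str) -> Metrics:
    """Map a made-up Mastodon payload onto the shared shape."""
    interactions = raw["boosts"] + raw["favourites"] + raw["replies"]
    return Metrics("mastodon", start, end, {"interactions": interactions},
                   followers=raw["followers_count"],
                   engagement_rate=interactions / raw["followers_count"])


def from_bluesky(raw: dict[str, int], start: str, end: str) -> Metrics:
    """Map a made-up Bluesky payload onto the shared shape."""
    interactions = raw["reposts"] + raw["likes"] + raw["replies"]
    return Metrics("bluesky", start, end, {"interactions": interactions},
                   followers=raw["followers"],
                   engagement_rate=interactions / raw["followers"])


# Illustrative numbers only, not real metrics.
rows = [
    from_mastodon({"boosts": 10, "favourites": 5, "replies": 5, "followers_count": 500},
                  "2025-01-01", "2025-12-31"),
    from_bluesky({"reposts": 20, "likes": 16, "replies": 4, "followers": 800},
                 "2025-01-01", "2025-12-31"),
]

best = max(rows, key=lambda m: m.engagement_rate or 0.0)
print(best.platform, best.engagement_rate)  # bluesky 0.05
```

Once each platform's quirks are absorbed by its own adapter, the comparison at the end is a single line, and that is the property the LLM benefits from as well.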

What’s Next

Planned improvements:

- Automated weekly reports via cron + email

- Anomaly detection - Alert on traffic spikes/drops

- Cohort analysis - Retention by signup date

- Revenue attribution - Track paid conversions

- More platforms - TikTok, X/Twitter, Pinterest

- Custom dashboards - Pre-built report templates

The Philosophy

This project embodies my approach to AI tooling:

- Minimal dependencies - Every dependency is a liability

- Transparent data - AI should cite sources, not hallucinate

- Actionable insights - Reports should drive decisions, not just show charts

- Privacy-first - All data stays in your control

- Modular design - Each platform is independent, composable

The best AI tools augment human decision-making rather than replace it. That’s why chat mode lets you ask follow-up questions instead of just dumping a static report.

Try it out: github.com/rhelmer/attribution-mcp

Questions? The README has detailed setup instructions for each platform, and the codebase is designed to be readable and extensible.